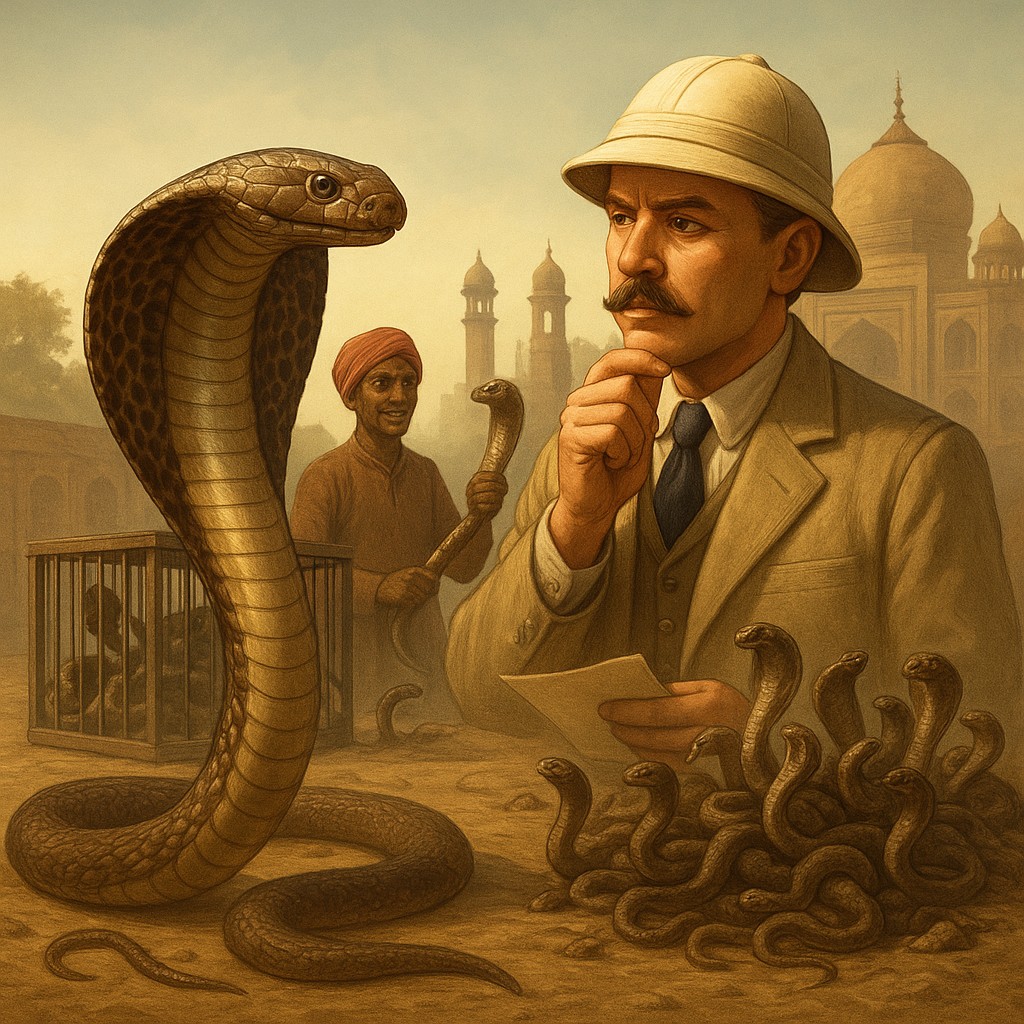

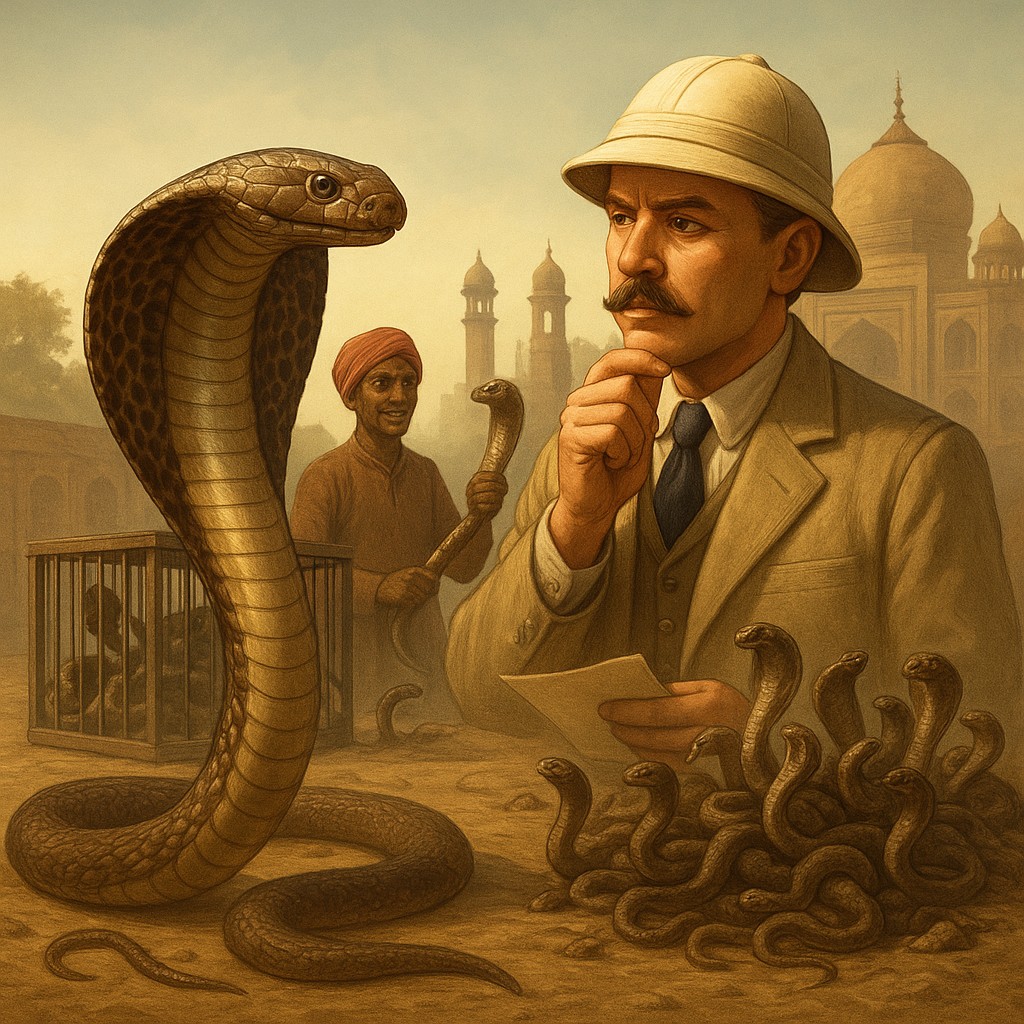

Imagine for a moment that you are a British colonial official in Delhi, India. Your city is overrun with venomous cobras, and the local people are living in constant fear. In what you believe is a stroke of genius and efficiency, you decide to use the power of the free market to solve the problem. You announce a bounty: for every dead cobra brought to the government office, the citizen will receive a generous cash reward. At first, the plan is a total success. Piles of dead snakes appear, the number of wild cobras seems to drop, and you congratulate yourself on being a master of social planning.

However, humans are remarkably clever, especially when a paycheck is at stake. Local entrepreneurs soon realized that hunting wild, dangerous cobras in the brush was much harder than simply breeding them in their own backyards. A small-scale industry of snake farming emerged, where people raised cobras specifically to kill them and collect the reward. When the government eventually caught wind of the scam and canceled the bounty, the breeders were left with thousands of worthless, venomous snakes. Since they were no longer making money, they did the only thing that made sense for their own pockets: they opened the cages and let the snakes go. The end result was a city with many more cobras than when the program started. This is known as the "Cobra Effect," and it serves as a warning to anyone who thinks they can change the world with a simple bribe or a threat.

How "Perverse Incentives" Work

At its heart, the Cobra Effect is about more than just snakes; it is about the gap between what we say we want and what we actually reward. In the worlds of economics and policy, this is called a "perverse incentive." This happens when a reward or punishment is set up in a way that accidentally encourages the opposite of what was intended. It occurs because people do not just follow the spirit of a law; they follow the easiest path to get the most benefit for themselves. When we create a rule, we are essentially setting the "rules of the game," and people will play to win, even if that "win" ruins the original goal.

This problem stems from "linear thinking," where we assume that action A will lead directly to result B. If we want fewer snakes, we pay people to kill snakes. It sounds logical. However, the real world is made of complex systems that adapt to changes. These systems have many moving parts that react to their surroundings. When you drop a new incentive into a human society, you aren't just pushing a button. You are adding a new factor that every person will try to use for their own gain. If the reward is high enough, it can warp the entire system, creating feedback loops that the person who made the rule never imagined.

The tragedy of the Cobra Effect is that it usually starts with good intentions. No official sets out to increase the number of snakes in a city. They want to solve a serious problem. However, by focusing on a "stand-in measurement" (the dead snake skin) rather than the actual problem (the wild snake population), they invited people to cheat. In any system, if you measure success by a specific number, people will find a way to move that number without actually fixing the problem the number was meant to represent.

Examples from Around the World

History is full of examples where leaders accidentally created their own versions of the cobra problem. During the French colonial era in Hanoi, Vietnam, a similar situation happened with rats. The city was crawling with rodents, so the government offered a bounty for rat tails. Before long, officials began seeing plenty of rats running around without any tails. The citizens were catching the rats, cutting off their tails for the money, and releasing them back into the sewers to breed. It was a great business model for the residents, but a disaster for the city’s health.

We see this in the corporate world all the time today. Imagine a software company that pays its programmers based on how many "bugs" (errors) they find and fix in their code. It sounds like a great way to ensure quality. In reality, the programmers might start writing messy code on purpose, leaving easy errors they can "discover" later to get a bonus. The measurement (bugs fixed) becomes the goal, rather than the intended result (stable software). The incentive actually creates more errors than it fixes.

Even environmental laws can fall into this trap. In some places, governments try to cut carbon emissions by offering subsidies for "renewable" fuels. However, if these rules aren't written carefully, they can lead to farmers cutting down natural rainforests to plant the crops used for those fuels. In an effort to save the planet, the policy accidentally destroys some of the world's most important carbon sinks. This "leakage" effect is a direct cousin of the Cobra Effect: focusing on one small number causes massive damage elsewhere.

Comparing Intent vs. Actual Results

To see how these rewards turn from helpful to harmful, let’s look at some classic examples. This table shows how a "metric that can be gamed" often replaces the "real goal."

| Original Problem | Proposed Reward/Rule | Intended Result | The "Cobra" Result |
| --- | --- | --- | --- |
| Too many cobras | Bounty for snake skins | Fewer snakes in the city | Snake farming and more total snakes |
| Too many rats | Bounty for rat tails | A rat-free city | Tail-less rats breeding in sewers |
| Poor medical care | Pay doctors for every patient seen | Faster treatment | Shorter, lower-quality visits to boost numbers |
| High air pollution | Driving limits based on license plates | Fewer cars on the road | People buying second, cheaper, dirtier cars |
| Slow customer service | Target for shortest phone calls | Efficient problem solving | Staff hanging up on customers with hard problems |

Why We Fall for the Trap

You might wonder why smart people keep making these mistakes. Why don't we see the snake breeders coming? The answer lies in "Goodhart’s Law," commonly summarized as: "When a measure becomes a target, it ceases to be a good measure." As soon as we tell people we are judging them by a specific number, that number starts to drift away from the reality it was meant to track. This is because humans tend to act as "utility maximizers." We look for the easiest way to get what we want with the least effort. If you offer $10 for a snake skin, I could spend all day in the dangerous jungle, or I could spend five minutes in my backyard shed. My brain naturally chooses the shed.
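To make the jungle-versus-shed choice concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than historical data: the `Option` class, the `best_option` helper, and the effort figures (eight hours for "all day," five minutes for the shed) are made up to mirror the example above.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    hours_per_skin: float      # assumed effort needed to produce one skin
    removes_wild_snake: bool   # does this option shrink the wild population?


def best_option(options, bounty):
    """Pick the option that pays the most bounty per hour of effort."""
    return max(options, key=lambda o: bounty / o.hours_per_skin)


# Hypothetical numbers: "all day" in the jungle vs. "five minutes" in the shed.
options = [
    Option("hunt in the jungle", hours_per_skin=8.0, removes_wild_snake=True),
    Option("breed in the shed", hours_per_skin=5 / 60, removes_wild_snake=False),
]

choice = best_option(options, bounty=10.0)
print(choice.name, "| removes a wild snake:", choice.removes_wild_snake)
```

Notice that the bounty never asks where the skin came from. The rule pays the same $10 either way, so the option that does nothing for the real goal wins on payoff per hour.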

There is also a limit known as "bounded rationality." None of us can see the future perfectly, and we make decisions with limited time and information. When officials make a policy, they look at the current problem and try to fix it. They often ignore "second-order effects," which are the consequences of the consequences. They see the first two steps: (1) Pay for snakes -> (2) People kill snakes. They miss the next steps: (3) People breed snakes for money -> (4) Government stops paying -> (5) People release the snakes. To avoid this, you have to think like a chess player, looking several moves ahead to see how a clever opponent might react.
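The five steps above can be sketched as a toy simulation. This is a rough illustration under invented assumptions, not a model of what happened in Delhi: the function name `simulate_bounty` and every number in it (starting population, weekly hunting and farming rates) are hypothetical.

```python
def simulate_bounty(bounty_weeks=10, wild_start=1000,
                    hunted_per_week=20, farm_sold_per_week=80,
                    farm_stock_growth_per_week=50):
    """Toy model: track the proxy (skins paid for) next to the real goal (wild snakes)."""
    wild = wild_start
    caged = 0        # live snakes sitting in breeders' cages
    skins_paid = 0   # what the bounty office counts

    for _ in range(bounty_weeks):
        # Steps 1-2: the bounty is on, and some people do hunt wild snakes.
        hunted = min(hunted_per_week, wild)
        wild -= hunted
        # Step 3: breeders sell farmed skins and also grow their caged stock.
        caged += farm_stock_growth_per_week
        skins_paid += hunted + farm_sold_per_week

    # Steps 4-5: the bounty is canceled and the cages are opened.
    wild += caged
    return {"skins_paid": skins_paid, "wild_start": wild_start, "wild_end": wild}


result = simulate_bounty()
print(result)
# → {'skins_paid': 1000, 'wild_start': 1000, 'wild_end': 1300}
```

With these made-up rates, the office pays for 1,000 skins, yet the wild population ends at 1,300, higher than where it started. The proxy looks like a triumph right up until the cages open.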

Finally, there is "Confirmation Bias." Once a policy is active, leaders want to believe it is working. If the bounty office collects 1,000 skins in a week, they record that as 1,000 fewer snakes in the wild. They are so focused on their own success that they ignore rumors of snake farms. They see what they want to see. Without a way to admit failure, these bad incentives can last for years, doing damage long before anyone admits the plan was broken from the start.

Designing Better Systems

How do we stop breeding cobras? The first step is to stop using "stand-in metrics" and focus on "total outcomes." Instead of paying for individual snake skins, perhaps the government should have hired a professional, state-run team that was paid a salary to keep the city safe. When the hunters don't get paid per snake, they have no reason to breed them. We must connect rewards to the long-term health of the system rather than a short-term number.

Another strategy is "Red Teaming." Before a new rule or goal is launched, you should try to break it. Ask yourself, "If I were lazy or greedy, how would I use this rule to get the reward without doing the real work?" If you set a goal like "I will spend one hour at the gym every day," the lazy version of you might just sit on a bench and look at your phone. You "met the goal" but failed the purpose. To fix this, you might change the goal to something harder to fake, like a specific heart rate or a weight-lifting milestone.

Lastly, we must accept that plans need to change. No plan survives its first encounter with the public. A well-made incentive should include a "sunset clause," a date when the rule expires or must be reviewed to check for unintended consequences. By admitting we can't predict everything, we leave room to change course before the snake cages are opened. It takes humility to admit a "bright idea" might be making things worse, but that humility is the only thing that stops a small problem from becoming an infestation.

Thinking Ahead

As you go through your day, whether you are managing a team, setting goals for your kids, or trying to keep a New Year’s resolution, remember the cobras of Delhi. We live in a world driven by incentives, but we must design them deliberately rather than follow them blindly. When you reach for a quick fix or a simple reward for a complex problem, stop and think about the next steps. Are you encouraging the behavior you actually want, or are you just giving someone the tools to build a snake farm?

The Cobra Effect isn't a reason to stop trying to improve things, but it is a powerful reminder that human cleverness can work for or against us. When we respect how complex the world is, we stop looking for "magic bullets" and start looking for thoughtful, lasting changes. By focusing on the spirit of our goals rather than just the numbers, we can create a world where honesty pays better than cheating. The next time you see a problem, don't just throw money at it. Look at the big picture and make sure your solution doesn't have a venomous bite of its own.