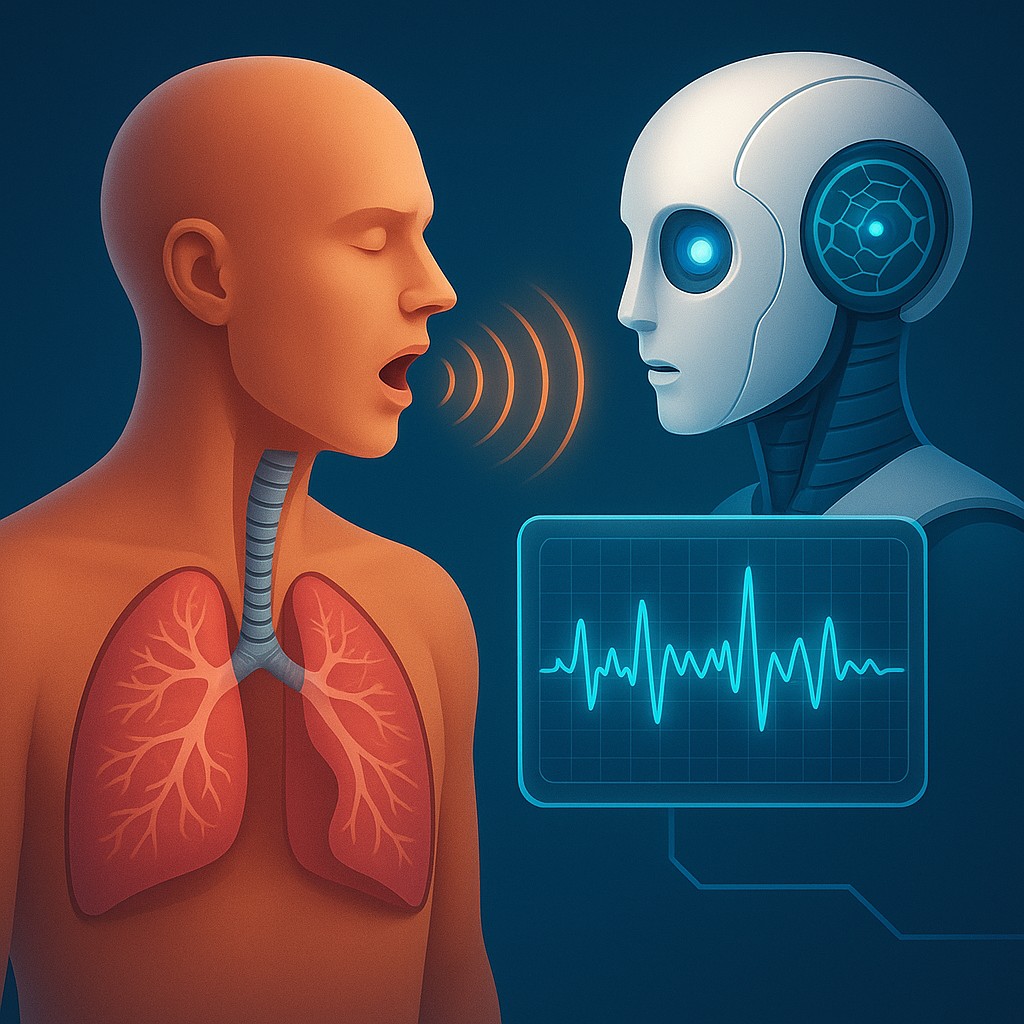

Imagine walking through a busy train station or a crowded airport. You hear the familiar soundtrack of city life: the hum of engines, the murmur of travelers, and the occasional sharp bark of a cough. To most of us, that cough is just background noise or a cue to step away from a stranger. But to a new wave of global health technology, that sound is a data packet. It carries a unique signature of air movement, vocal cord tension, and lung congestion that artificial intelligence can decode.

We are entering the era of acoustic epidemiology, a field that treats the collective sounds of a city as a biological sensor. By analyzing thousands of coughs in real time, public health agencies are beginning to spot the "shadow" of an outbreak before the first patient even thinks about calling a doctor. This marks a shift from reactive medicine, where we wait for people to feel sick enough to seek help, to proactive monitoring, where the very air tells us when a new virus has arrived in town.

The Mechanical Orchestra of the Human Airway

To understand how a machine "hears" a disease, we first have to realize that a cough is not just a random noise. It is a highly coordinated, high-speed physical event. When you cough, your body builds up immense pressure against a closed glottis (the opening between the vocal cords), which then snaps open to blast air out at high speed. Popular accounts put that speed as high as 500 miles per hour, although airflow measured at the mouth is typically far slower. This violent rush of air hits everything in its path, including mucus, inflamed tissue, or narrowed bronchial tubes. Each of these conditions changes the "timbre" or quality of the sound, much as air sounds different when blown through a flute versus a tuba.

Bacterial pneumonia often creates "wet" coughs because the lungs are filled with fluid and debris. This fluid adds a heavy, damp resonance to the sound. On the other hand, viral infections like COVID-19 or the flu more often result in a "dry" cough caused by irritation and swelling rather than excess fluid. While the human ear might struggle to tell the difference between fifteen types of dry coughs, machine learning models can break the audio down into a spectrogram, which is a visual map of how the energy at each sound frequency changes over time. In this map, a "dry" viral cough looks nothing like a "crunchy" asthmatic wheeze or the "hollow" bark of croup.
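To make the idea of a spectrogram concrete, here is a minimal sketch in plain Python. It slices a signal into short overlapping frames and measures how much energy each frame holds at each frequency. The function name `spectrogram`, the frame size, and the synthetic 1,000 Hz test tone are all assumptions chosen for illustration; a real system would use an optimized FFT library and actual cough recordings.

```python
import cmath
import math

def spectrogram(samples, frame_size=64, hop=32):
    """Return one list of frequency-bin magnitudes per overlapping frame."""
    # A Hann window tapers each frame so its edges do not smear the spectrum.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1))
              for n in range(frame_size)]
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        chunk = [samples[start + n] * window[n] for n in range(frame_size)]
        magnitudes = []
        # Naive discrete Fourier transform: slow, but dependency-free.
        for k in range(frame_size // 2 + 1):
            acc = sum(chunk[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                      for n in range(frame_size))
            magnitudes.append(abs(acc))
        frames.append(magnitudes)
    return frames

# Synthetic stand-in for audio: a pure 1,000 Hz tone sampled at 8,000 Hz.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 1000 * n / sample_rate) for n in range(512)]
spec = spectrogram(tone)

# The strongest bin in the first frame should sit at the tone's frequency.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * sample_rate / 64
print(peak_hz)  # 1000.0
```

Each row of `spec` is one time slice and each column is one frequency band, which is exactly the grid that gets drawn as a spectrogram image.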

The real breakthrough lies in the timing of these noises. A machine does not just hear the "cough" sound; it measures how long the initial blast lasts, the length of the silence that follows, and the tiny vibrations of the vocal folds. These data points are then compared against massive databases of thousands of verified medical recordings. By spotting these patterns, acoustic sensors can flag a specific respiratory signature spreading through a neighborhood, acting like a radar system that detects a pandemic in its infancy.
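The timing measurements described above can be sketched with a simple loudness threshold. The example below is an illustration under stated assumptions, not how any particular product works: the function name `burst_timing`, the 10-millisecond frame, and the threshold value are hypothetical, and the "cough" is a synthetic tone with silences around it.

```python
import math

def burst_timing(samples, sample_rate, frame_len=80, threshold=0.1):
    """Return (burst durations, gaps between bursts), both in seconds."""
    # Mark each non-overlapping frame as loud or quiet using its RMS level.
    active = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        rms = math.sqrt(sum(x * x for x in frame) / frame_len)
        active.append(rms > threshold)

    # Collapse consecutive frames with the same label into runs.
    runs = []
    for flag in active:
        if runs and runs[-1][0] == flag:
            runs[-1][1] += 1
        else:
            runs.append([flag, 1])

    frame_s = frame_len / sample_rate
    bursts = [round(n * frame_s, 3) for flag, n in runs if flag]
    # Only quiet runs that sit between two bursts count as gaps.
    gaps = [round(n * frame_s, 3) for i, (flag, n) in enumerate(runs)
            if not flag and 0 < i < len(runs) - 1]
    return bursts, gaps

sample_rate = 8000

def tone(n):
    return [0.8 * math.sin(2 * math.pi * 400 * i / sample_rate) for i in range(n)]

# Silence, a 0.3 s burst, a 0.2 s pause, a 0.1 s burst, then silence again.
signal = [0.0] * 800 + tone(2400) + [0.0] * 1600 + tone(800) + [0.0] * 800
bursts, gaps = burst_timing(signal, sample_rate)
print(bursts, gaps)  # [0.3, 0.1] [0.2]
```

A fixed threshold only works on clean audio like this. In a noisy room the threshold would have to adapt to the background level.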

Privacy Through the Lens of Pure Physics

One of the most common concerns about public health surveillance is the fear of being watched or identified. However, acoustic epidemiology offers a unique solution to this privacy dilemma because it focuses on the mechanics of sound rather than the identity of the person. Modern health sensors use "edge processing," meaning the audio is analyzed locally on the device and then immediately deleted. The device does not save a recording of your conversation; it simply extracts the mathematical features of a cough and sends a tiny packet of anonymous data to a central server.

This shift from "who is speaking" to "how the air is moving" is a fundamental change in how we think about data. In these systems, your voice is treated as a physical event, much like how a seismograph measures an earthquake. The software can be programmed to ignore human speech entirely, focusing only on the specific frequency ranges where coughs occur. This ensures that while a health department might see that a certain type of cough is increasing in a specific zip code, they have no way of knowing which person made those sounds. This layer of abstraction allows for city-wide data collection without the need for intrusive facial recognition or GPS tracking.
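As a rough sketch of what "extract the features, discard the audio" could look like, the snippet below reduces a buffer of samples to a handful of numbers and a coarse location and time label. Everything here is hypothetical: the function `summarize_cough`, the field names, and the choice of features are assumptions for illustration, and whether a given feature set is truly anonymous is something a real deployment would need to verify.

```python
import json
import math

def summarize_cough(samples, sample_rate, region_code, hour_bucket):
    """Reduce raw audio to a small numeric summary; the audio is not included."""
    n = len(samples)
    rms = math.sqrt(sum(x * x for x in samples) / n)
    # Zero-crossing rate is a crude indicator of how "bright" a sound is.
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0))
    packet = {
        "region": region_code,      # coarse area only, e.g. a ZIP code
        "hour": hour_bucket,        # coarse time only, no exact timestamp
        "duration_s": round(n / sample_rate, 3),
        "rms": round(rms, 4),
        "zero_cross_rate": round(crossings / (n - 1), 4),
    }
    return json.dumps(packet, sort_keys=True)

# Synthetic stand-in for half a second of captured audio.
sample_rate = 8000
buffer = [math.sin(2 * math.pi * 200 * i / sample_rate) for i in range(4000)]

packet = summarize_cough(buffer, sample_rate, region_code="00000", hour_bucket=14)
# Drop the only reference to the raw audio once the summary exists.
# (In Python this releases the object; it is not a secure memory wipe.)
del buffer
print(packet)
```

The packet carries no waveform, no speaker identifier, and no precise timestamp, which is the design property the privacy argument rests on.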

| Feature | Human Ear Perception | Acoustic AI Detection |
| --- | --- | --- |
| Frequency Range | Limited to the audible spectrum | Can analyze high-frequency micro-harmonics |
| Volume Sensitivity | Subjective and easily drowned out | Can filter out background noise to focus on the signal |
| Classification | General (Wet vs. Dry) | Specific (Viral, Bacterial, Chronic, or Allergic) |
| Memory | Relies on short-term memory of a few hours | Can compare sounds across millions of data points |
| Consistency | Affected by fatigue or bias | Perfectly consistent 24/7 |

Deciphering the Visual Map of Disease

If you looked at a recording of a cough on a computer screen, you would see a complex wave of peaks and valleys. This is where "feature extraction" takes place. Researchers look for specific markers, such as Mel-Frequency Cepstral Coefficients (MFCCs). These are mathematical "fingerprints" of a sound's power spectrum. By training a computer network on these fingerprints, engineers can teach a smartphone app to recognize the subtle difference between a smoker's cough and a cough caused by a brand-new virus.
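For readers curious about what goes into an MFCC "fingerprint," the following sketch walks through the common textbook recipe: take the power spectrum of a short frame, pool it into bands spaced on the mel scale (which mimics how humans perceive pitch), take the logarithm, and compress the result with a discrete cosine transform. The function names, the 20 filters, and the 13 output coefficients are conventional but assumed choices, and the two input "sounds" are synthetic tones rather than coughs. Production code would normally rely on an audio library instead.

```python
import cmath
import math

def hz_to_mel(hz):
    return 2595.0 * math.log10(1.0 + hz / 700.0)

def mel_to_hz(mel):
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)

def power_spectrum(frame):
    n = len(frame)
    # Hamming window, then a naive DFT (slow but dependency-free).
    xs = [s * (0.54 - 0.46 * math.cos(2 * math.pi * i / (n - 1)))
          for i, s in enumerate(frame)]
    return [abs(sum(xs[t] * cmath.exp(-2j * math.pi * k * t / n)
                    for t in range(n))) ** 2 / n
            for k in range(n // 2 + 1)]

def mel_filterbank(n_filters, n_fft, sample_rate):
    # Triangular filters whose centres are evenly spaced on the mel scale.
    top = hz_to_mel(sample_rate / 2)
    mel_points = [top * i / (n_filters + 1) for i in range(n_filters + 2)]
    bins = [int(math.floor((n_fft + 1) * mel_to_hz(m) / sample_rate))
            for m in mel_points]
    bank = []
    for j in range(1, n_filters + 1):
        left, centre, right = bins[j - 1], bins[j], bins[j + 1]
        row = [0.0] * (n_fft // 2 + 1)
        for k in range(left, centre):
            row[k] = (k - left) / (centre - left)
        for k in range(centre, right):
            row[k] = (right - k) / (right - centre)
        bank.append(row)
    return bank

def dct2(values, n_out):
    n = len(values)
    return [sum(v * math.cos(math.pi * k * (i + 0.5) / n)
                for i, v in enumerate(values))
            for k in range(n_out)]

def mfcc(frame, sample_rate, n_filters=20, n_coeffs=13):
    spec = power_spectrum(frame)
    bank = mel_filterbank(n_filters, len(frame), sample_rate)
    # A small constant keeps the logarithm defined for empty bands.
    log_energies = [math.log(sum(w * p for w, p in zip(row, spec)) + 1e-10)
                    for row in bank]
    return dct2(log_energies, n_coeffs)

# Two synthetic "sounds" with different pitch yield different fingerprints.
sample_rate = 8000
low = [math.sin(2 * math.pi * 440 * t / sample_rate) for t in range(256)]
high = [math.sin(2 * math.pi * 1730 * t / sample_rate) for t in range(256)]
low_mfcc = mfcc(low, sample_rate)
high_mfcc = mfcc(high, sample_rate)
```

Each call returns 13 numbers per frame. A classifier is then trained on sequences of these vectors rather than on the raw waveform.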

This technology is currently being used in many environments, from hospital waiting rooms to remote villages. In areas where expensive lab tests are hard to get, a health worker can use a basic smartphone to record a patient's cough and get an instant assessment of whether they likely have tuberculosis (TB). TB has a very specific sound signature because of the way it creates cavities in lung tissue. This "acoustic triage" allows doctors to decide who needs a high-cost lab test most urgently, making sure limited resources are used effectively.

Beyond individual diagnosis, the ultimate goal for global health agencies is "passive monitoring." Imagine a sensor in a pharmacy or a school hallway that simply counts the number and type of coughs it hears. If the "cough density" in a school spikes on a Tuesday, and the signature matches a known flu strain, health officials can warn parents and local clinics before hospitals are overwhelmed on Friday. This three-day head start can be the difference between a small local cluster and a runaway community outbreak.
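One simple way to turn daily cough counts into an alert is to compare each day against the recent past. The sketch below flags a day when its count sits more than three standard deviations above the previous week's average. The function name `flag_spikes`, the seven-day baseline, the threshold, and the counts themselves are all invented for illustration; real surveillance systems use more careful statistical models.

```python
import statistics

def flag_spikes(daily_counts, baseline_days=7, z_threshold=3.0):
    """Return indexes of days whose count is far above the trailing baseline."""
    flagged = []
    for day in range(baseline_days, len(daily_counts)):
        baseline = daily_counts[day - baseline_days:day]
        mean = statistics.mean(baseline)
        # Fall back to 1.0 if the baseline is perfectly flat.
        spread = statistics.stdev(baseline) or 1.0
        z_score = (daily_counts[day] - mean) / spread
        if z_score > z_threshold:
            flagged.append(day)
    return flagged

# Made-up counts from one hypothetical hallway sensor, one value per day.
counts = [12, 15, 11, 14, 13, 12, 16, 14, 41, 45]
print(flag_spikes(counts))  # [8]
```

Day 8 is flagged, but day 9 is not, even though its count is higher: once the spike enters the trailing window, it inflates the baseline. That is one reason practical systems tend to freeze or robustly estimate the baseline after an alert.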

Challenges in a Noisy World

Despite its potential, this technology is not a magic wand. The real world is incredibly loud. An acoustic sensor in a busy subway station has to deal with screeching brakes, slamming doors, and the voices of hundreds of people. Spotting a single half-second cough in that environment is like looking for a specific grain of sand on a beach. Developers are currently working on "noise-robust" algorithms that use secondary microphones to cancel out background noise, similar to the technology in high-end headphones.
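The secondary-microphone idea can be illustrated with a classic adaptive filter known as least mean squares (LMS). This is a textbook technique chosen here as an example, not necessarily what these developers use. A reference microphone hears mostly background noise; the filter learns how that noise shows up in the primary microphone and subtracts its estimate. The function name `lms_cancel`, the filter length, the step size, and the synthetic signals are assumptions.

```python
import math
import random

def lms_cancel(primary, reference, n_taps=4, step=0.01):
    """Subtract an adaptively estimated copy of the reference noise."""
    weights = [0.0] * n_taps
    cleaned = []
    for i in range(len(primary)):
        # Most recent reference samples, newest first (zeros before the start).
        recent = [reference[i - k] if i - k >= 0 else 0.0 for k in range(n_taps)]
        noise_estimate = sum(w * r for w, r in zip(weights, recent))
        error = primary[i] - noise_estimate  # what remains is the cleaned sample
        # Nudge each weight in the direction that shrinks the error.
        weights = [w + step * error * r for w, r in zip(weights, recent)]
        cleaned.append(error)
    return cleaned

random.seed(0)
n = 4000
# A quiet synthetic signal standing in for the sound of interest.
cough_like = [0.2 * math.sin(2 * math.pi * 0.01 * i) for i in range(n)]
noise = [random.uniform(-1, 1) for _ in range(n)]
# The primary microphone hears the signal plus an echoed version of the noise.
primary = [cough_like[i] + 0.8 * noise[i] + 0.3 * (noise[i - 1] if i else 0.0)
           for i in range(n)]
cleaned = lms_cancel(primary, noise)

def residual_power(observed):
    """Average squared difference from the true signal over the second half."""
    tail = range(n // 2, n)
    return sum((observed[i] - cough_like[i]) ** 2 for i in tail) / len(tail)

print(residual_power(primary), residual_power(cleaned))
```

After the filter has had time to adapt, the leftover noise in `cleaned` is a small fraction of what was in `primary`. Real stations are harder because the reference microphone also picks up some of the cough itself.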

There is also the challenge of "demographic drift." A cough from a six-year-old child sounds very different from that of an eighty-year-old man, even if they have the same virus, because their lung capacities are different. To be effective, the AI must be trained on a broad range of "acoustic profiles" that account for age, sex, and even location, as humidity and altitude can change how sound travels. Avoiding bias is crucial; if an algorithm is only trained on recordings from young adults in one country, it might fail to detect an outbreak in an older population somewhere else.
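A basic check for this kind of bias is to measure how often the model catches true cases within each group rather than only overall. The sketch below computes that per-group detection rate (often called recall or sensitivity). The function name `recall_by_group`, the group labels, and the evaluation records are invented for illustration and do not come from any real study.

```python
from collections import defaultdict

def recall_by_group(records):
    """records: iterable of (group, truly_sick, predicted_sick) tuples."""
    hits = defaultdict(int)
    positives = defaultdict(int)
    for group, truly_sick, predicted_sick in records:
        if truly_sick:
            positives[group] += 1
            if predicted_sick:
                hits[group] += 1
    # Fraction of genuinely sick people the model flagged, per group.
    return {group: hits[group] / positives[group] for group in positives}

# Made-up evaluation results: 10 truly sick people in each age group.
records = (
    [("child", True, True)] * 5 + [("child", True, False)] * 5
    + [("adult", True, True)] * 9 + [("adult", True, False)] * 1
    + [("older", True, True)] * 6 + [("older", True, False)] * 4
)
rates = recall_by_group(records)
print(rates)  # {'child': 0.5, 'adult': 0.9, 'older': 0.6}
```

In this invented example the model looks strong for adults but misses half of the sick children, a gap that an overall accuracy figure would hide.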

The final hurdle is public perception. Even if the technology is anonymous, the idea of "listening" sensors can feel uncomfortable. This is why transparency is vital. Health agencies must prove that the data is used for the public good and that the machine only "hears" the physical mechanics of illness. When people understand that this technology is more like a weather station for germs than a wiretap for secrets, it becomes much easier for the public to accept.

A Future Where the Air Tells a Story

The move toward acoustic epidemiology represents a broader shift in how we deal with the invisible world of microbes. For centuries, we were blind to outbreaks until they were already devastating our communities. With the discovery of germs and lab testing, we can now see the enemy, but only if we look through a microscope at a specific sample. Now, by using sound and machine learning, we are developing a "sense of hearing" for the health of our entire environment.

This evolution turns every smartphone into a potential tool for global safety. In the future, your phone might quietly notify you that the cough you have had for two days is a 90 percent match for a common seasonal virus, suggesting you stay home to rest before you even feel "sick" enough to go to bed. It turns the collective, often annoying sound of a coughing crowd into a protective shield, allowing us to listen to the whispers of our own biology.

As we refine these tools, the goal is not just to detect disease, but to better understand our shared respiratory health. We are learning that our bodies communicate in ways we never fully realized, using the physics of sound to signal distress long before our words do. By tuning our technology to these frequencies, we are creating a more resilient world, one where the next pandemic might be stopped not by a needle, but by the simple act of listening carefully to the air around us.