To grasp the current revolution in data center design, we must stop thinking of air as a friendly, invisible presence and see it for what it is: a stubborn thermal wall. For decades, the formula for computing was simple. You packed transistors onto a silicon wafer, ran electricity through them, and blew a fan over the result. This worked because air - despite being a poor conductor of heat - was free and easy to move. We simply built bigger rooms and louder fans, confident that as long as we kept the breeze moving, the heat would find its way out. But as we enter the era of massive artificial intelligence and high-density processing, we have hit a physical limit where the wind is no longer enough.

Modern processors now generate so much heat in such a small space that cooling them with air is like trying to cool a bonfire by blowing through a straw. Still air is an excellent insulator; you take advantage of this every time you wear a puffy jacket to trap body heat. When 72 powerful GPUs (graphics processing units) are packed into a single server rack, the "puffy jacket" of air surrounding the chips becomes a danger. Heat density has reached a point where air simply cannot move fast enough or soak up enough energy to keep the silicon from throttling (deliberately slowing down to shed heat) or, in extreme cases, shutting down to avoid permanent damage. This has forced engineers to turn to a solution long used in high-end gaming PCs and heavy machinery: the cooling power of liquids.

The Physics of the Thermal Ceiling

The core problem with air is its low "volumetric heat capacity": the amount of energy a given volume can absorb for each degree its temperature rises. Compare a cubic meter of air with a cubic meter of water: warming the water by one degree takes more than 3,000 times as much energy, so the water can soak up that much more heat. In a data center, this means that to move the same amount of heat, you need either a modest flow of liquid or a terrifying hurricane of air. As chips move toward drawing 700 or even 1,000 watts of power each, the volume of air needed to cool them simply won't fit through a standard server chassis. The fans required would consume a large share of the rack's power budget and produce punishing noise and vibration.
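To put numbers on the comparison, the short sketch below computes the volumetric heat capacity of each fluid (density times specific heat) and the flow needed to carry 1,000 W away with a 10 K temperature rise, using the energy balance Q = ρ · c_p · V̇ · ΔT. The property values are rounded textbook figures near room temperature, and the 1,000 W chip and 10 K rise are assumptions chosen for illustration. With these values the ratio comes out near 3,500; the exact figure shifts with temperature and pressure.

```python
# Approximate property values near 20 °C and sea-level pressure (rounded textbook figures).
AIR_DENSITY = 1.2        # kg/m^3
AIR_CP = 1005.0          # J/(kg·K), specific heat at constant pressure
WATER_DENSITY = 998.0    # kg/m^3
WATER_CP = 4182.0        # J/(kg·K)


def volumetric_heat_capacity(density: float, cp: float) -> float:
    """Energy (J) needed to warm one cubic meter of the fluid by one kelvin."""
    return density * cp


def volume_flow_for_heat(power_w: float, density: float, cp: float, delta_t_k: float) -> float:
    """Volume flow (m^3/s) that carries power_w away while warming by delta_t_k."""
    return power_w / (volumetric_heat_capacity(density, cp) * delta_t_k)


ratio = volumetric_heat_capacity(WATER_DENSITY, WATER_CP) / volumetric_heat_capacity(AIR_DENSITY, AIR_CP)

# Hypothetical case: one 1,000 W chip, with the coolant allowed to warm by 10 K.
air_flow = volume_flow_for_heat(1000.0, AIR_DENSITY, AIR_CP, 10.0)
water_flow = volume_flow_for_heat(1000.0, WATER_DENSITY, WATER_CP, 10.0)

print(f"water/air heat capacity per volume: {ratio:,.0f}x")
print(f"air needed:   {air_flow * 1000:.1f} L/s")
print(f"water needed: {water_flow * 1000 * 60:.2f} L/min")
```

For a single chip, that works out to roughly 83 liters of air every second versus about 1.4 liters of water per minute. Multiply by the dozens of chips in a dense rack and the "hurricane" stops being a figure of speech.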

Furthermore, air is chaotic. It creates swirls and stagnant pockets that lead to "hot spots" inside a server. Even if the room feels cold, a corner of a circuit board might be simmering because a cable is blocking the breeze. This inconsistency forces data centers to over-cool their entire facilities, wasting massive amounts of energy on air conditioning just to make sure the stubborn corners stay safe. We are moving away from this "flood the room" approach toward a more surgical method: grabbing the heat right at the source.

Direct Contact and the Cold Plate Strategy

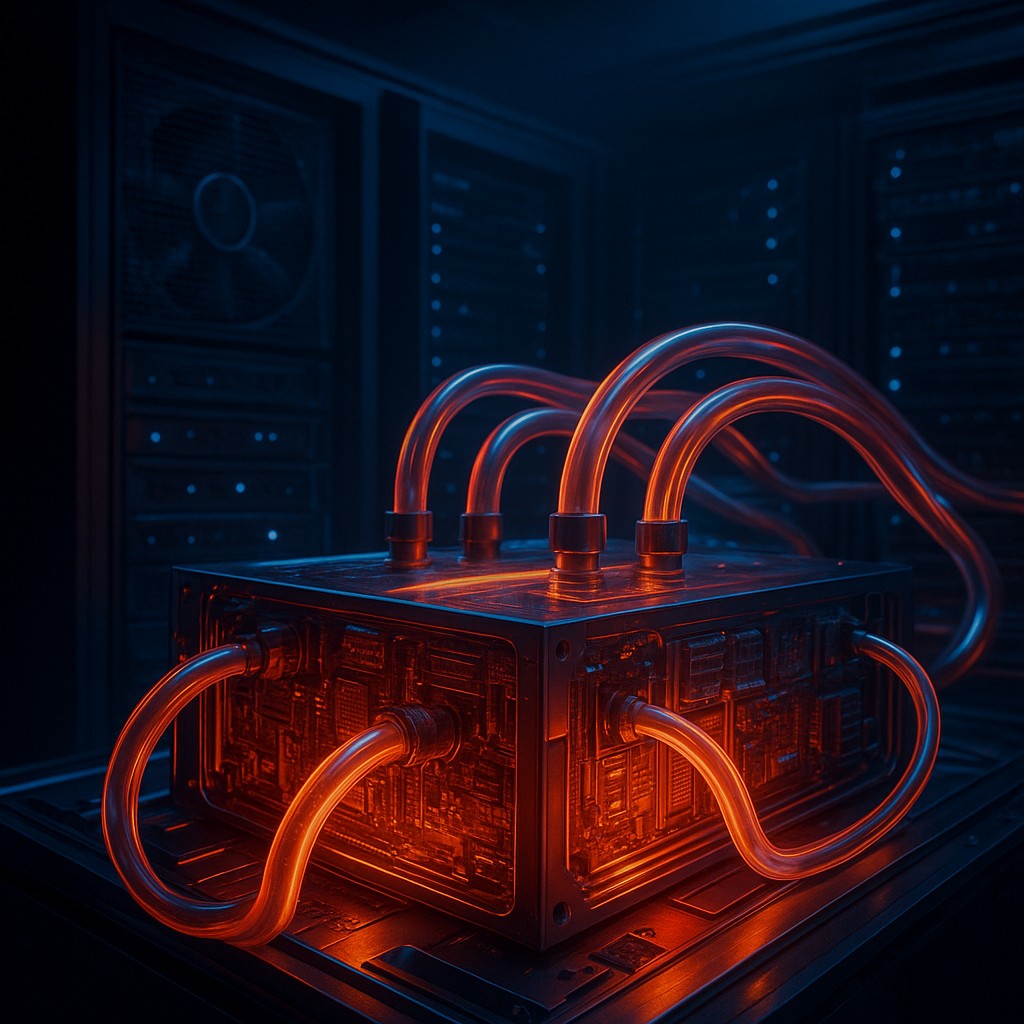

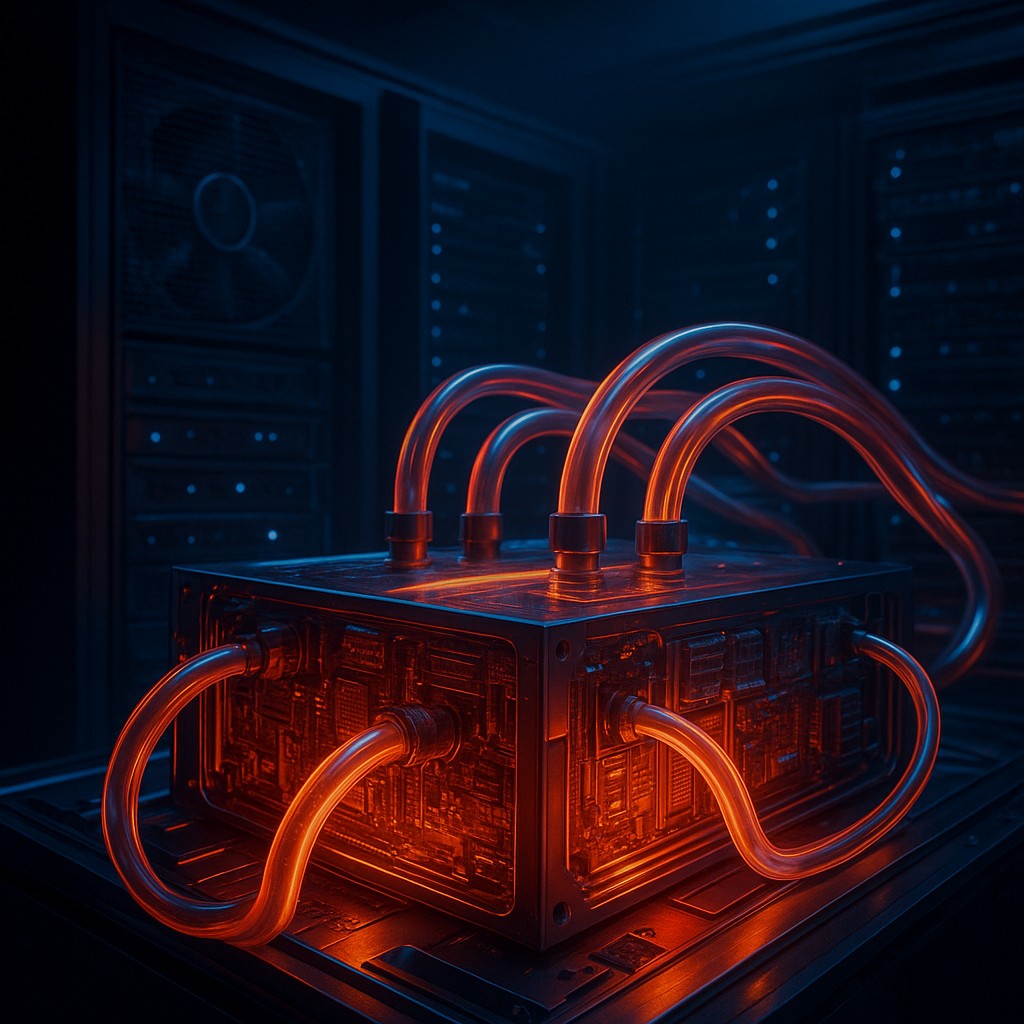

The most immediate shift in the industry is the move to "direct-to-chip" cooling, often called cold plate technology. In this setup, the traditional metal fins and fans are replaced by a sealed metal block, usually made of copper. Inside this block is a maze of tiny channels. A pump circulates a liquid - usually water or a specialized coolant - directly through these paths. Because the liquid sits so close to the silicon, it whisks away heat with incredible efficiency. This allows manufacturers to pack chips much closer together, as they no longer need "breathing room" for airflow.
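A common way engineers reason about this is a chain of thermal resistances (in kelvin per watt) between the chip and the coolant: the chip's temperature equals the coolant temperature plus the power multiplied by the sum of the resistances. The sketch below uses hypothetical, purely illustrative resistance values, not figures for any real product, to show why shortening and improving that path matters: even with warmer coolant, a low-resistance cold plate can keep the chip cooler than an air heatsink.

```python
from dataclasses import dataclass


@dataclass
class ThermalPath:
    """Series thermal resistances (K/W) between a chip and its coolant."""
    name: str
    resistances_k_per_w: tuple[float, ...]

    def chip_temp_c(self, power_w: float, coolant_temp_c: float) -> float:
        # Heat crosses each resistance in turn, so the temperature rises add up:
        # T_chip = T_coolant + P * sum(R_i)
        return coolant_temp_c + power_w * sum(self.resistances_k_per_w)


# Hypothetical values; real ones depend on the package, thermal paste,
# plate design, and flow rate.
air_heatsink = ThermalPath("air heatsink", (0.02, 0.08))  # paste + fins-to-air
cold_plate = ThermalPath("cold plate", (0.02, 0.02))      # paste + plate-to-liquid

# The cold plate is fed warmer coolant (35 °C) than the air heatsink (25 °C).
for path, coolant_c in ((air_heatsink, 25.0), (cold_plate, 35.0)):
    print(f"{path.name}: {path.chip_temp_c(700.0, coolant_c):.1f} °C at 700 W")
```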

This change turns a server rack from a collection of fans into a complex plumbing system. Each server connects to a "manifold," a central pipe that hands out cool liquid and collects the warmed fluid to be sent to a heat exchanger. The beauty of this system is that it can be a closed loop. The water touching the chip doesn't have to be the same water that goes to the cooling tower on the roof. By using a heat exchanger, we can transfer energy from the "clean" internal loop to an "industrial" external loop, keeping sensitive electronics safe from dirt while dumping the heat outside.
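The plumbing follows the same energy balance at every step: heat = mass flow × specific heat × temperature rise. The sketch below applies it to a hypothetical rack of eight 15 kW servers on one manifold, then assumes an ideal, lossless heat exchanger that passes all of the rack's heat into the external loop. The rack size, the 10 K rise, and the 4 kg/s external flow are all made-up illustration values.

```python
WATER_CP = 4182.0  # J/(kg·K), approximate specific heat of water


def mass_flow_for_load(heat_w: float, delta_t_k: float, cp: float = WATER_CP) -> float:
    """Mass flow (kg/s) that carries heat_w while warming by delta_t_k."""
    return heat_w / (cp * delta_t_k)


def outlet_temp_c(inlet_c: float, heat_w: float, mass_flow_kg_s: float, cp: float = WATER_CP) -> float:
    """Temperature of a stream after it absorbs heat_w (steady state, no losses)."""
    return inlet_c + heat_w / (mass_flow_kg_s * cp)


# Hypothetical rack: 8 servers at 15 kW each on one supply/return manifold.
server_loads_w = [15_000.0] * 8
rack_load_w = sum(server_loads_w)

# Internal "clean" loop: coolant warms by a hypothetical 10 K across each server,
# so the manifold splits its total flow in proportion to each server's load.
per_server_flow = [mass_flow_for_load(q, delta_t_k=10.0) for q in server_loads_w]
internal_flow = sum(per_server_flow)

# External "industrial" loop: assuming an ideal heat exchanger, all of the rack's heat
# crosses into this loop, which here runs at a hypothetical 4 kg/s from 25 °C.
facility_return_c = outlet_temp_c(25.0, rack_load_w, mass_flow_kg_s=4.0)

print(f"internal loop: {internal_flow:.2f} kg/s ({internal_flow / 8:.3f} kg/s per server)")
print(f"external loop leaves the heat exchanger at {facility_return_c:.1f} °C")
```

The two loops never mix; they only need to agree on how many watts cross the heat exchanger, which is why the "clean" side can stay sealed while the "industrial" side carries the heat to the roof.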

The Radical World of Immersion Cooling

While cold plates are a smart evolution, immersion cooling is a total reimagining of the computer. In this scenario, we scrap the pipes and plates and simply dunk the entire server into a vat of liquid. This isn't tap water, which would cause an immediate short circuit. Instead, data centers use "dielectric fluids" - engineered liquids that do not conduct electricity but are fantastic at moving heat. There are two main ways to do this: single-phase and two-phase immersion.

In single-phase immersion, the fluid stays a liquid. It is pumped through the tank, flowing over every component and processor, and then sent to a cooling unit. In two-phase immersion, the fluid has a very low boiling point. As the chip heats up, the liquid around it actually boils, turning into vapor bubbles. This "phase change" from liquid to gas absorbs a massive amount of energy - far more than just heating up a liquid. The vapor rises to the top, hits a cooling coil, turns back into a liquid, and rains back down. It is a silent, self-sustaining ecosystem that looks more like a high-tech aquarium than a computer room.
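The advantage of boiling can be seen by comparing sensible heat (warming a liquid) with latent heat (vaporizing it). The sketch below uses hypothetical property values for a low-boiling dielectric fluid, roughly the order of magnitude engineered fluids tend to have but not data for any specific product, and asks how much fluid must be processed each second to absorb 1,000 W.

```python
# Hypothetical property values for a low-boiling dielectric fluid.
# Real fluids differ; a manufacturer's data sheet would give the actual numbers.
SPECIFIC_HEAT_J_PER_KG_K = 1100.0   # energy to warm 1 kg of liquid by 1 K
LATENT_HEAT_J_PER_KG = 100_000.0    # energy to boil 1 kg at its boiling point


def sensible_mass_flow(power_w: float, delta_t_k: float) -> float:
    """kg/s of liquid needed if the fluid only warms up (single-phase)."""
    return power_w / (SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)


def boiling_mass_rate(power_w: float) -> float:
    """kg/s of liquid that must boil off to absorb power_w (two-phase)."""
    return power_w / LATENT_HEAT_J_PER_KG


chip_power_w = 1000.0
single_phase = sensible_mass_flow(chip_power_w, delta_t_k=10.0)
two_phase = boiling_mass_rate(chip_power_w)

print(f"single-phase: {single_phase:.4f} kg/s, two-phase: {two_phase:.4f} kg/s")
print(f"boiling moves the same heat with {single_phase / two_phase:.1f}x less fluid")
```

With these assumed values, boiling handles the same load with about a ninth of the fluid mass, and the vapor then carries that energy to the condenser coil without a pump pushing it.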

| Feature | Traditional Air Cooling | Direct-to-Chip (Cold Plate) | Immersion Cooling |
|---|---|---|---|
| Cooling Medium | Room Air | Water or Specialty Coolant | Dielectric Fluid |
| Efficiency | Low (Air is an insulator) | High (Direct contact) | Ultra-High (Full contact) |
| Space Density | Low (Needs air gaps) | Medium-High (Dense racks) | Maximum (Ultra-dense) |
| Infrastructure | Large AC units / Fans | Complex Plumbing / Pipes | Liquid Vats / Tanks |
| Noise Level | Very High (Dozens of fans) | Moderate (Pumps) | Near Silent |

Rethinking Infrastructure and Maintenance

Swapping air for liquid isn't as simple as replacing a fan with a pipe. It requires a fundamental redesign of the data center's "skeleton." Standard floors are built to hold the weight of racks, but they aren't always piped for high-pressure water. Running a "wet" facility means installing leak sensors, heavy-duty pumps, and filters to ensure no microscopic debris clogs the tiny channels in a cold plate. It also changes how technicians work. If a server in an immersion tank fails, you don't just slide it out; you have to lift it from a bath, let it drip dry, and then perform the repair.
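Leak sensors are only one layer of protection. A monitoring system can also compare how much coolant leaves on the supply side with how much comes back on the return side. The sketch below is a hypothetical check, not any vendor's monitoring software: the reading format, the flow values, and the 2% tolerance for meter error are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class LoopReading:
    """One hypothetical sample from a rack loop: flows in L/min plus a spot leak sensor."""
    supply_lpm: float
    return_lpm: float
    leak_sensor_wet: bool


def check_loop(reading: LoopReading, max_imbalance_fraction: float = 0.02) -> list[str]:
    """Return alarm messages; an empty list means the loop looks healthy.

    Two independent checks:
    - a leak sensor under the rack reports liquid, or
    - noticeably less coolant returns than was supplied (beyond meter tolerance).
    """
    alarms = []
    if reading.leak_sensor_wet:
        alarms.append("leak sensor wet")
    if reading.supply_lpm > 0:
        imbalance = (reading.supply_lpm - reading.return_lpm) / reading.supply_lpm
        if imbalance > max_imbalance_fraction:
            alarms.append(f"flow imbalance {imbalance:.1%}")
    return alarms


print(check_loop(LoopReading(120.0, 119.5, False)))  # within tolerance
print(check_loop(LoopReading(120.0, 112.0, False)))  # coolant is going missing
```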

There is also the matter of the coolant itself. While water carries heat well, it is the natural enemy of electronics if a leak happens. Some providers use non-conductive fluids even in cold plate systems to lower this risk. These fluids are more expensive than water and require careful handling. However, the payoff is a much better Power Usage Effectiveness (PUE): the ratio of a facility's total energy use to the energy consumed by its IT equipment, where 1.0 would mean zero overhead. Because liquid cooling is so effective, it slashes the "overhead" electricity used for cooling, allowing more of the power bill to go toward actual computing.
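PUE is simple arithmetic: total facility power divided by the power delivered to IT equipment, so its reciprocal is the share of the bill that actually reaches the computers. The sketch below compares two hypothetical 1 MW facilities; the overhead figures are illustrative assumptions, not measurements from any real site.

```python
def pue(it_kw: float, cooling_kw: float, other_overhead_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power (1.0 is the ideal)."""
    return (it_kw + cooling_kw + other_overhead_kw) / it_kw


# Hypothetical 1 MW IT load; the overhead figures are illustrative, not measurements.
air_cooled = pue(1000.0, cooling_kw=400.0, other_overhead_kw=100.0)
liquid_cooled = pue(1000.0, cooling_kw=100.0, other_overhead_kw=100.0)

for label, value in (("air-cooled", air_cooled), ("liquid-cooled", liquid_cooled)):
    # 1 / PUE is the share of the facility's electricity that reaches the IT equipment.
    print(f"{label}: PUE {value:.2f}, {1 / value:.0%} of power reaches the IT equipment")
```

In this made-up example, cutting cooling overhead from 400 kW to 100 kW moves PUE from 1.50 to 1.20, raising the computing share of the power bill from about two-thirds to about 83%.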

Misconceptions About Liquid and Humidity

A common myth is that bringing liquid into a data center will create humidity and cause rust. In reality, these are closed systems. The water in a pipe or the fluid in a tank does not evaporate into the room. By moving heat through liquids, we actually gain better control over the environment. In air-cooled centers, massive AC units must constantly fight to keep humidity in a narrow band: not too dry (which causes static) and not too damp (which causes condensation). Liquid systems ease this struggle because most of the heat stays trapped in the loop rather than being dumped into the room's air.
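Condensation forms only on surfaces colder than the room air's dew point, so one way to sanity-check a loop is to compare its pipe temperature with that dew point. The sketch below estimates the dew point with the Magnus approximation, using a commonly cited coefficient set for water; the room conditions and pipe temperatures in the usage lines are hypothetical, and the check itself is an illustration rather than a description of any particular facility's controls.

```python
import math

# Magnus-formula coefficients (a commonly cited set for saturation over water).
MAGNUS_B = 17.62
MAGNUS_C = 243.12  # °C


def dew_point_c(air_temp_c: float, relative_humidity_pct: float) -> float:
    """Approximate dew point of room air using the Magnus formula."""
    gamma = math.log(relative_humidity_pct / 100.0) + MAGNUS_B * air_temp_c / (MAGNUS_C + air_temp_c)
    return MAGNUS_C * gamma / (MAGNUS_B - gamma)


def condensation_risk(pipe_surface_c: float, air_temp_c: float, rh_pct: float) -> bool:
    """True if a pipe at this temperature would collect condensation from the room air."""
    return pipe_surface_c <= dew_point_c(air_temp_c, rh_pct)


# Hypothetical room at 25 °C and 50% relative humidity (dew point close to 14 °C).
print(f"dew point: {dew_point_c(25.0, 50.0):.1f} °C")
print(condensation_risk(30.0, 25.0, 50.0))  # warm-water loop: stays dry
print(condensation_risk(10.0, 25.0, 50.0))  # chilled pipe: would sweat
```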

Another misconception is that liquid is more dangerous than air. While a leak is a valid concern, thousands of tiny, high-speed fans fail at a considerable rate. Fans break, collect dust, and vibrate, which wears down parts over time. Liquid systems have fewer moving parts at the server level. A single high-quality industrial pump is often more reliable and easier to monitor than five hundred small fans spinning at 10,000 RPM. As the industry matures, the "fear factor" of liquid is giving way to the realization that it is a more stable way to manage energy.
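Part of the argument is simple probability: the more independent parts you have, the more likely it is that at least one of them fails. The sketch below computes 1 − (1 − p)ⁿ for a large fan population and a small pump population. The per-part annual failure probabilities are hypothetical numbers chosen only to show the scaling, and treating failures as independent is itself an assumption.

```python
def prob_any_failure(annual_failure_prob: float, count: int) -> float:
    """Chance that at least one of `count` independent parts fails within a year."""
    return 1.0 - (1.0 - annual_failure_prob) ** count


# Hypothetical failure probabilities, chosen only to illustrate the scaling.
fans = prob_any_failure(0.01, 500)   # 500 small fans, 1% each per year
pumps = prob_any_failure(0.02, 2)    # 2 industrial pumps, 2% each per year

print(f"at least one fan fails this year:  {fans:.1%}")
print(f"at least one pump fails this year: {pumps:.1%}")
```

The comparison is deliberately one-sided: a pump failure affects far more equipment than a single fan failure, which is why pumps are often installed with a redundant spare.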

The Future of "Waste" Heat

One of the most exciting parts of this shift is what we can do with the heat once it leaves the chip. When you cool a data center with air, the heat is spread out into a large volume of air, making it "low-grade" heat - warm, but not hot enough to be useful. However, because liquid cooling captures energy so efficiently, the water coming out of a rack can be quite hot, sometimes reaching 60 degrees Celsius (140 degrees Fahrenheit).
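Physicists put a number on the "grade" of heat with the Carnot factor, 1 − T_ambient / T_heat (temperatures in kelvin): the largest fraction of that heat that could, in principle, be turned into useful work. The sketch below compares hypothetical 35 °C exhaust air with the 60 °C water mentioned above, against an assumed 10 °C outdoor temperature. The higher the factor, the more valuable each joule of waste heat is.

```python
def carnot_factor(heat_temp_c: float, ambient_temp_c: float) -> float:
    """Upper bound on the fraction of heat convertible to work: 1 - T_ambient / T_heat (kelvin)."""
    t_hot = heat_temp_c + 273.15
    t_cold = ambient_temp_c + 273.15
    return max(0.0, 1.0 - t_cold / t_hot)


ambient_c = 10.0                                      # hypothetical outdoor temperature
warm_exhaust_air = carnot_factor(35.0, ambient_c)     # hypothetical air-cooled exhaust
hot_water = carnot_factor(60.0, ambient_c)            # liquid-loop outlet from the text

print(f"35 °C exhaust air: {warm_exhaust_air:.3f}")
print(f"60 °C water:       {hot_water:.3f}")
```

Under these assumptions, each joule of 60 °C water is worth nearly twice as much as a joule of 35 °C air, before even counting how much easier water is to pipe somewhere useful.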

This "high-grade" waste heat is a valuable resource. Forward-thinking cities are exploring heat-reuse projects where hot water from a data center is piped directly into a local heating system to warm nearby apartments or greenhouses. Instead of being a waste product we pay to get rid of, the heat from our digital lives becomes a utility. The data center becomes a dual-purpose building: a hub for cloud computing and a heating plant for the community. This circular economy is only possible because liquid allows us to catch heat in a concentrated, movable form.

Mastering the Fluid Frontier

The move to liquid cooling is a testament to our drive for performance. We have essentially outgrown the Earth's atmosphere as a cooling medium for our most advanced machines. By embracing the density of liquids, we aren't just solving a technical glitch; we are unlocking the next stage of human calculation. This shift requires us to think like plumbers, physicists, and environmentalists all at once. It reminds us that no matter how "virtual" our world feels, it is always tied to the rigid laws of physics.

As you look at your smartphone or laptop, remember that the "thin air" cooling them is a luxury of the past for big industry. We are entering an era where high-performance computing will be quieter, largely fanless, and increasingly submerged. This journey into the fluid frontier is more than just a hardware upgrade; it is a fundamental change in how we manage the energy powering our digital future, making it more efficient, sustainable, and powerful.