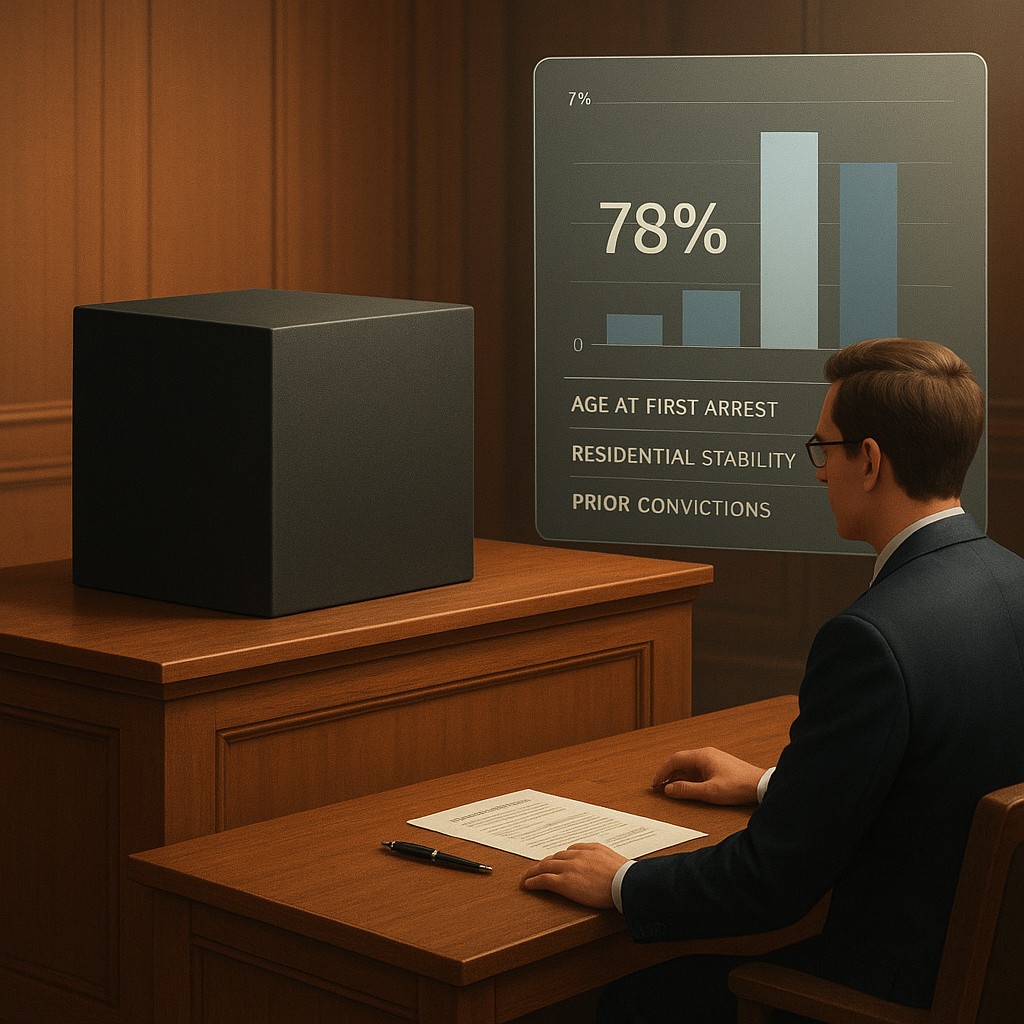

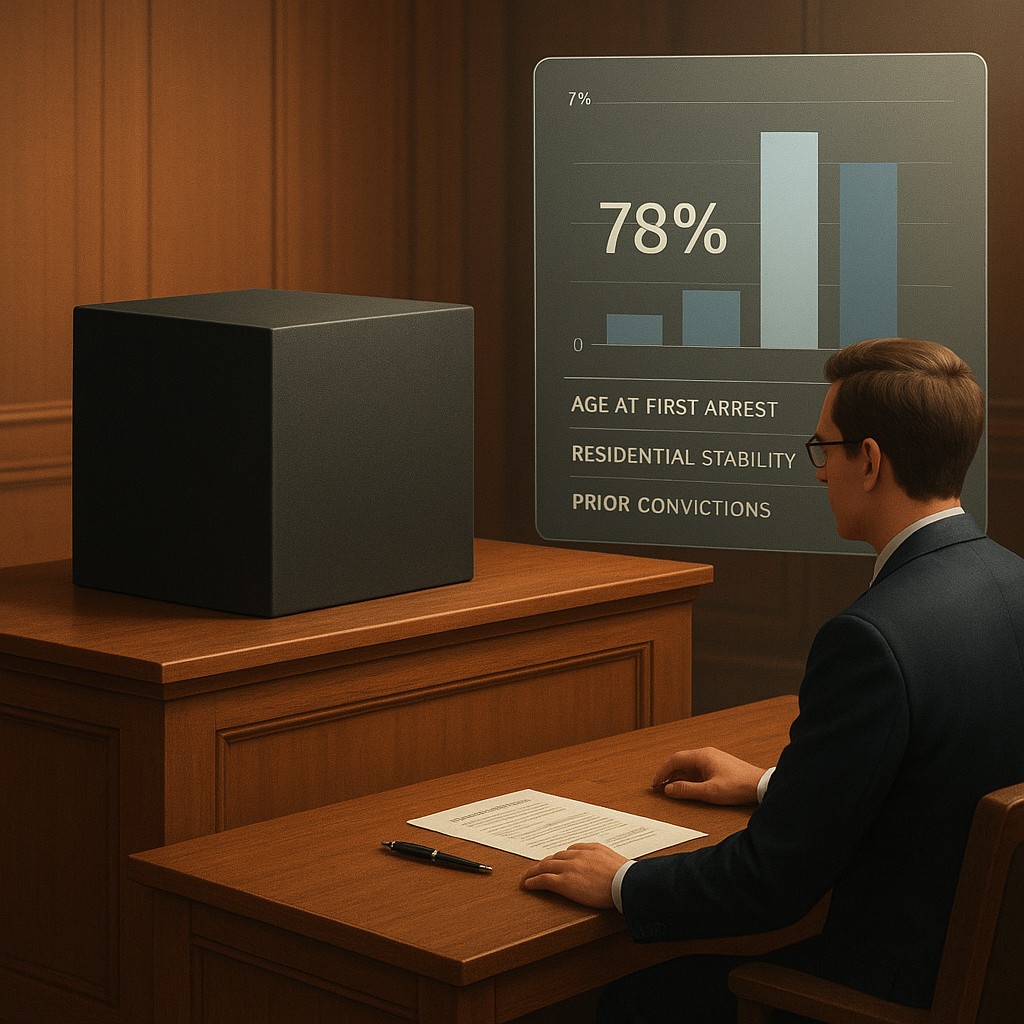

Imagine walking into a courtroom where the judge is not a person, but a sleek, silent black box sitting on the bench. You are there for a sentencing hearing, and the judge announces that based on a secret calculation, you have a 78 percent chance of committing another crime within the next two years. When your lawyer asks how that number was reached, the response is a legal shrug citing trade secrets and corporate privacy.

For years, this was not a scene from a science fiction novel, but a reality in many courtrooms around the world. Thousands of defendants have been sentenced with the help of risk assessment tools whose inner workings were guarded more closely than the recipe for a famous soda.

The tide is beginning to turn as legal systems move toward what experts call the "transparency era." Instead of just looking at a final score, new laws demand that the specific values assigned to every data point - from your age at your first arrest to whether you have a stable home - be explained in plain English. This shift is about more than just being open; it moves the legal battle from the software’s final answer to the ingredients of its recipe. By exposing these mathematical weights, we can finally see if the machine is using race or poverty as a hidden factor under the guise of neutral data.

The Secret Ingredient Problem in Modern Law

The primary tension in automated sentencing has always been the clash between corporate profit and the right to a fair trial. Private companies develop these tools, spending millions on data scientists and engineers to create predictive models. Naturally, they want to protect their investment, so they claim the specific formulas are trade secrets. However, the legal system relies on the principle that the accused has the right to challenge the evidence against them. If a machine says you are dangerous but your lawyer cannot see why, that fundamental right is effectively lost.

When we talk about "weights," we are talking about the "coefficient" of a variable - essentially, how much importance the computer gives to a specific piece of information. In simple terms, if a risk assessment asks ten questions, they are not all equal. A question about your age might be worth five points, while a question about graduating high school might only be worth one.
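To make the idea of weights concrete, here is a minimal sketch of a points-based score. The factor names and point values are hypothetical, chosen only to mirror the five-point and one-point example above; they are not taken from any real risk assessment tool.

```python
# Hypothetical point values, for illustration only; not taken from any real tool.
WEIGHTS = {
    "young_at_first_arrest": 5,   # the age question counts five times as much...
    "no_high_school_diploma": 1,  # ...as the education question
}


def risk_score(answers):
    """Sum the weight of every factor, scaled by the answer (1 = yes, 0 = no)."""
    return sum(WEIGHTS[factor] * answer for factor, answer in answers.items())


score_both = risk_score({"young_at_first_arrest": 1, "no_high_school_diploma": 1})
score_age_only = risk_score({"young_at_first_arrest": 1, "no_high_school_diploma": 0})
print(score_both, score_age_only)  # 6 5
```

Two defendants who answer "yes" to a single question each can end up with very different scores, depending only on which question it was. That is why the weights matter as much as the answers.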

For a long time, the public only saw the final score. Now, the demand for clear disclosures means the software must reveal if it is, for example, using "number of moves in the last year" as a major predictor of crime. This allows a defense team to argue that the machine isn't actually predicting crime, but rather the instability that comes with being a low-income renter.

This movement toward transparency is less about making the math simpler and more about making bias visible. If an algorithm puts a high value on a "zip code," and that zip code belongs to a historically marginalized neighborhood, the algorithm is essentially laundering social bias through a digital filter. Without seeing these weights, people often assume the computer is being objective because it doesn't have feelings. In reality, a computer is just a mirror of the data it was fed. If that data is biased, the weights will reflect that bias with mathematical precision.

Breaking Down Risk Factor Weights

To understand why this shift matters, we have to look at how these tools actually work. Many of these algorithms rely on "regression analysis," a statistical technique that estimates how strongly each input is associated with an outcome. They look at historical data from thousands of people and try to find links between events. If the data shows that people first arrested at age sixteen are more likely to be arrested again than those first arrested at thirty, the machine assigns a "weight" to the age of the first arrest. The problem is that a link between two things does not mean one caused the other, yet the law often treats these weights as if they were proof of a person's character.
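As a rough illustration of where such a weight comes from, the sketch below derives one from historical counts. It assumes the simplest possible case: a logistic regression with a single yes/no factor, where the fitted weight equals the logarithm of the odds ratio between the two groups. The counts are invented for the example, and real tools combine many factors at once.

```python
import math


def log_odds_weight(rearrested_a, total_a, rearrested_b, total_b):
    """Weight for belonging to group A rather than group B.

    For a logistic regression with one binary factor, the fitted
    coefficient equals the log of the odds ratio between the groups.
    """
    odds_a = rearrested_a / (total_a - rearrested_a)
    odds_b = rearrested_b / (total_b - rearrested_b)
    return math.log(odds_a / odds_b)


# Invented counts: 60 of 100 people first arrested young were rearrested,
# compared with 30 of 100 people first arrested later in life.
weight = log_odds_weight(60, 100, 30, 100)
print(round(weight, 2))  # 1.25
```

The weight records only that the two groups differed in the historical data. Nothing in the calculation explains why they differed, which is the gap between correlation and causation described above.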

The shift toward showing these weights allows us to compare different tools and see what they truly value. Some tools might focus heavily on "static factors" - things you cannot change, like your past record. Others might include "dynamic factors," such as current employment or whether you are in a treatment program. By looking at the weights, we can determine if the justice system is using a tool that believes in rehabilitation or one that believes people are permanently defined by their worst day.

| Component | Traditional "Black Box" Approach | Emerging Transparency Model |
| --- | --- | --- |
| Access to Logic | Private, protected by trade secret laws. | Mandatory disclosure of weights and variables. |
| Defense Strategy | Challenging the accuracy of the final score. | Challenging the specific importance given to factors. |
| Public Oversight | Limited to high-level academic audits. | Clear reports for community and public review. |
| Primary Goal | Efficiency and predictive accuracy. | Fairness, clarity, and legal accountability. |
| Bias Detection | Studying unfair results after they happen. | Finding biased inputs before they are used. |

The Illusion of Objectivity and Data Substitutes

A common misconception is that if we remove "race" from the list of questions, the tool becomes colorblind. This is what data scientists call the "proxy variable" problem. Even if the machine doesn't know a defendant's race, it might know their neighborhood, their education level, their income, and the age of their first interaction with police. In many societies, these factors are so closely tied to race due to historical issues that the algorithm ends up using them as a substitute.
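One simple way an auditor might look for a proxy is to measure how closely a supposedly neutral input tracks a protected attribute. The sketch below computes a Pearson correlation on a tiny invented dataset; the variable names and values are assumptions for illustration, and a real audit would use far more data and more careful statistics.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Invented data: 1 = belongs to a protected group; the tool never sees this column.
protected_group = [1, 1, 1, 1, 0, 0, 0, 0]
# Invented data: 1 = lives in a zip code the tool treats as high risk.
flagged_zip = [1, 1, 1, 0, 0, 0, 0, 1]

r = pearson(protected_group, flagged_zip)
print(round(r, 2))  # 0.5
```

A correlation well above zero means that a weight placed on the zip code also lands, indirectly, on group membership, even though the protected attribute was never an input.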

By forcing transparency, we can see exactly how much emphasis a tool puts on these substitutes. If an algorithm gives significant weight to whether a person's parents were ever in prison, it is essentially punishing a child for the actions of their parents. In a traditional hearing, a judge who stated they were giving a longer sentence because of the defendant's parents would face an immediate appeal. However, when an algorithm does it silently, it often goes unnoticed. Transparency brings these silent judgments into the light of the courtroom where they can be debated.

It is also important to realize that transparency does not mean the algorithm is "fixed." A tool can be perfectly transparent and still be incredibly biased. The difference is that the bias is now a choice made by humans within the legal system rather than a hidden quirk of the software. If we see that a tool weights "unemployment" heavily, we can have a public debate about whether someone should stay in jail longer simply because they lost their job. Transparency transforms a technical problem into a moral and political one, which is exactly where it belongs in a democracy.

Challenging the Math in Court

For lawyers, the move toward transparency creates new ways to defend their clients. In the past, questioning an algorithm was nearly impossible. You couldn't put software on the witness stand and ask why it labeled a defendant "high risk."

Now, with access to the weights, defense attorneys can bring in their own experts to argue that the math is unsound or discriminatory. They can argue that the weight assigned to a specific factor is "arbitrary and capricious" - a legal standard for decisions that lack a rational basis.

This also changes the role of the judge. Instead of receiving a risk score of "8 out of 10" and taking it at face value, the judge can see the breakdown. They might see that the score is high primarily because of the defendant's youth, rather than a history of violence. This allows for a more careful application of justice. If the judge knows the "why" behind the score, they can decide if it aligns with the goals of sentencing, such as public safety or helping someone get their life back on track.
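The kind of breakdown a judge might see can be sketched as a ranked list of each factor's contribution to the total. The factor names and weights below are hypothetical and only illustrate the idea of a score driven mostly by youth rather than by a violent history.

```python
# Hypothetical weights, for illustration only.
WEIGHTS = {"age_under_25": 4, "prior_violent_offenses": 3, "unemployed": 1}


def breakdown(defendant):
    """Return (factor, points) pairs, largest contribution first."""
    contributions = {
        factor: weight * defendant.get(factor, 0)
        for factor, weight in WEIGHTS.items()
    }
    return sorted(contributions.items(), key=lambda item: item[1], reverse=True)


defendant = {"age_under_25": 1, "prior_violent_offenses": 0, "unemployed": 1}
ranked = breakdown(defendant)
total = sum(points for _, points in ranked)

for factor, points in ranked:
    print(f"{factor}: {points} of {total} points")
```

In this invented case, four of the five points come from age and none from violence, which is exactly the kind of detail a single number hides.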

There is a risk that transparency could lead to people trying to "game the system." If everyone knows exactly how the score is calculated, some fear defendants might lie to lower their scores. While this is a concern, much of the data used in these tools comes from official records rather than self-reporting, which limits how far a score can be manipulated. Furthermore, the benefit of having a system that can be held accountable far outweighs the risk of someone trying to nudge their score down. The integrity of the justice system relies on the idea that rules are known and applied equally.

The Future of Explainable Justice

As we move forward, the focus will likely shift from simple transparency to "interpretability." It is one thing to see a list of numbers; it is another to understand how they work together in a complex model. The next generation of legal rules is pushing for "explainable AI," where the system must provide a written explanation for its decision. Instead of a spreadsheet, the court might receive a statement saying, "The risk score is high primarily due to the frequency of arrests in the last two years, despite the defendant having a steady job."
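A written explanation of this kind could, in the simplest case, be generated from the per-factor contributions themselves. The sketch below is one hypothetical way to do that; the function name, factor labels, and numbers are assumptions for illustration, and real explainable-AI systems are considerably more involved.

```python
def explain(contributions):
    """Turn per-factor contributions into a one-sentence explanation.

    Positive values raise the risk score; negative values lower it.
    """
    raising = [item for item in contributions.items() if item[1] > 0]
    lowering = [item for item in contributions.items() if item[1] < 0]
    if not raising:
        return "No factor raised the risk score."
    top_raising = max(raising, key=lambda item: item[1])[0]
    sentence = f"The risk score is driven primarily by {top_raising}"
    if lowering:
        top_lowering = min(lowering, key=lambda item: item[1])[0]
        sentence += f", despite {top_lowering}"
    return sentence + "."


# Invented contributions for a single defendant.
statement = explain({
    "frequent arrests in the last two years": 2.1,
    "a steady job": -0.8,
    "age": 0.3,
})
print(statement)
```

A sentence like this is only as trustworthy as the contributions behind it, so the written explanation complements the disclosed weights rather than replacing them.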

This evolution reflects a broader trend in how we use technology. We are moving away from the era of "the computer said so" and into an era of "show your work." This is vital in the justice system, where the stakes are not just a movie recommendation, but a human being's freedom. By stripping away the "black box" and exposing the machinery of sentencing, we are reclaiming the human element of judgment. We are acknowledging that while math can be a helpful tool, it should never have the final word in a court of law.

Ultimately, the goal of these transparency laws is to ensure that technology serves justice, rather than defining it. We must remain skeptical of any system that claims to predict human behavior with certainty. As we gain more insight into these algorithms, we gain the power to improve them, challenge them, and occasionally discard them when they fail to meet our human standards of fairness. Transparent weights are a victory for clarity, but they are only the beginning of the journey toward a truly fair digital legal system.

The shift toward algorithmic transparency invites us to be active participants in the digital age. It asks us to define what fairness looks like when it is translated into code. By understanding the weights that shape legal outcomes, we empower ourselves to demand a system that is as just as it is efficient. As you watch these laws evolve, remember that the most important part of any algorithm isn't the code, but the human values we choose to program into it.