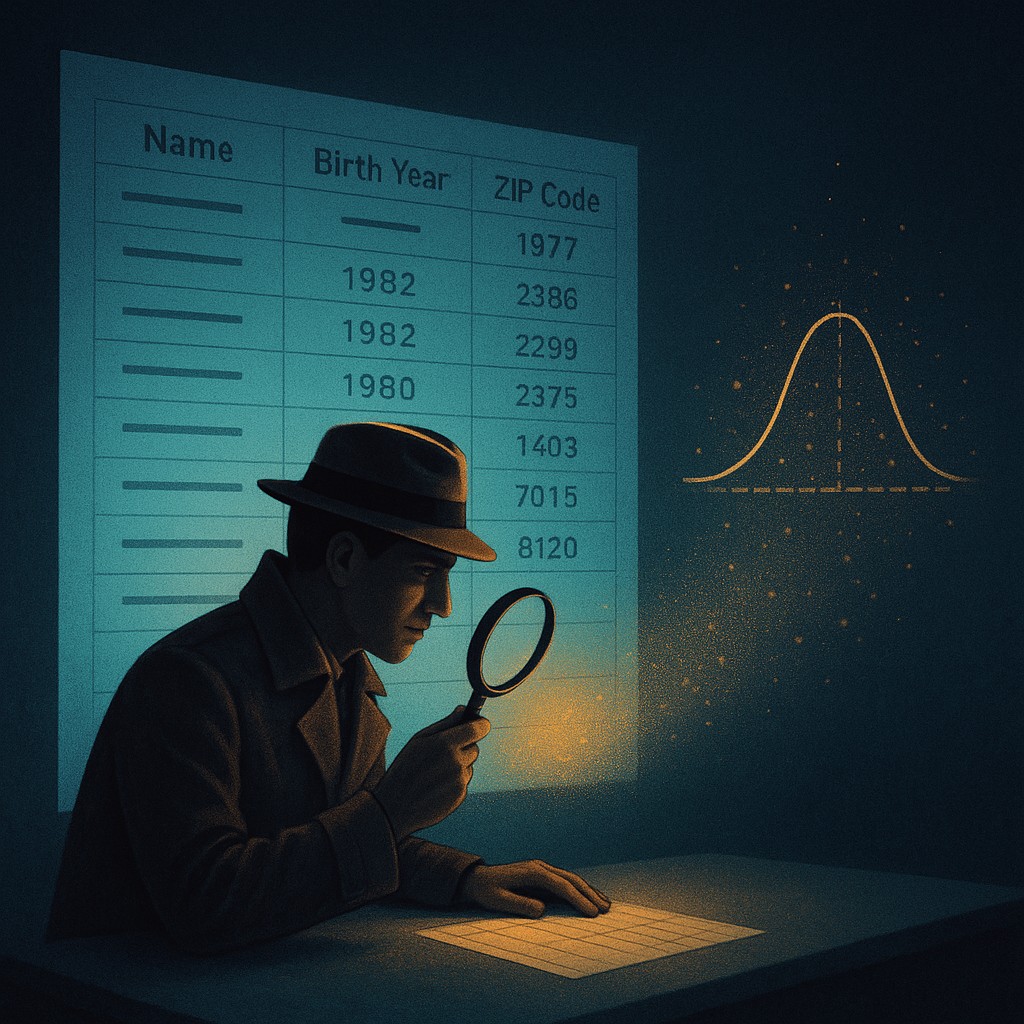

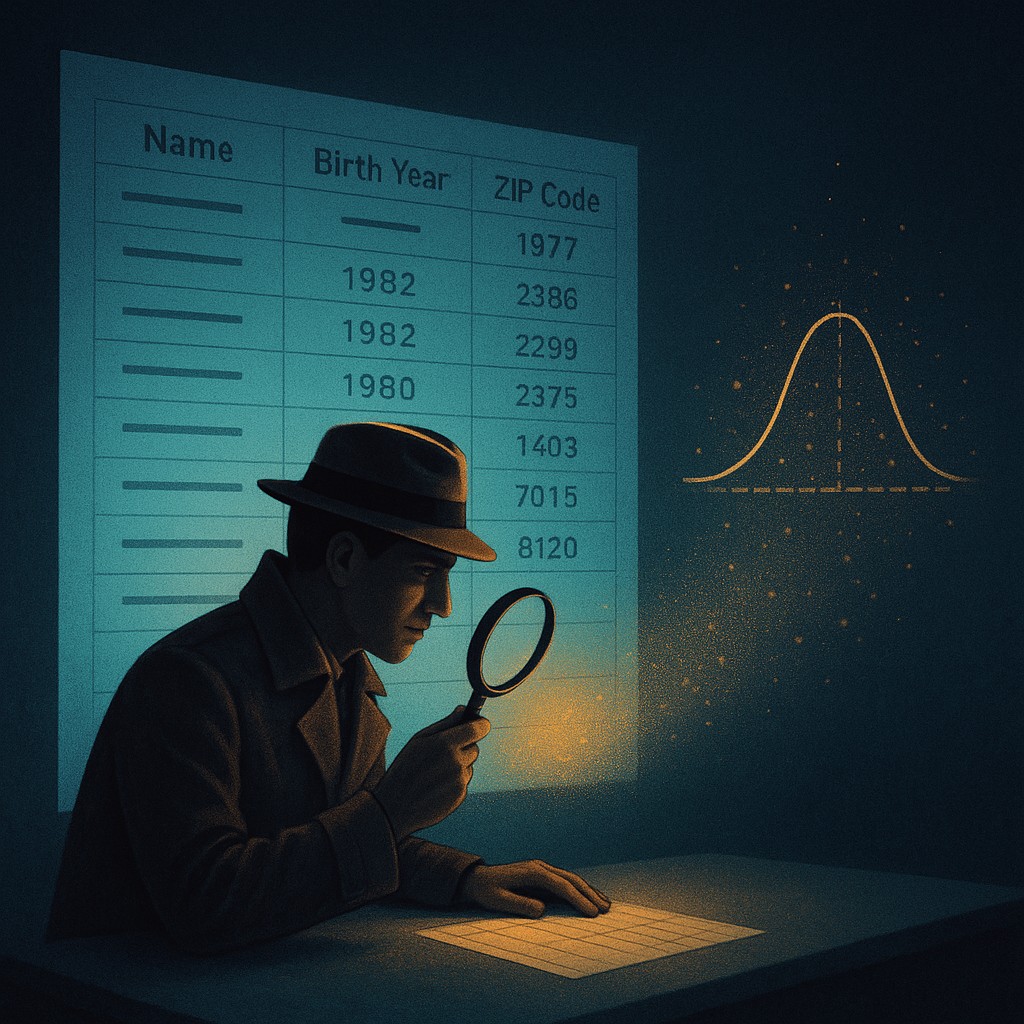

Imagine you are a detective trying to track down one person in a massive, "hidden" dataset. To protect them, their name has been deleted, their birthday has been cut down to just the birth year, and their home address has been swapped for a general zip code. You might think this person is invisible, but in modern data science, they are practically standing under a spotlight. By matching this "anonymized" list with public records like voter registrations or even movie review sites, a smart algorithm can re-identify people with startling accuracy. This is the great dilemma of the information age: we want to learn big-picture facts about groups without accidentally exposing the private lives of the individuals within them.

Traditional privacy methods are like trying to hide a face behind a thin piece of gauze; if you look from enough different angles, the features eventually become clear. This reality has led to the rise of a rigorous mathematical framework known as differential privacy. Instead of just deleting names, data scientists now inject a calculated amount of "mathematical noise" into datasets or into the answers computed from them. It is like adding just enough static to a radio broadcast so you can still hear the song, but you cannot quite tell if the singer has a slight lisp. This technique strictly limits how confidently anyone can tell whether a single person is in a database at all, providing a mathematical shield that holds up even if an attacker has access to every other database in the world.

The Mosaic Effect and why traditional secrecy fails

For decades, the standard way to protect privacy was "de-identification." This involved stripping away what we call PII, or Personally Identifiable Information. If you removed Social Security numbers and names, the data was considered "safe." However, the "Mosaic Effect" shattered this illusion. Just as a mosaic is made of tiny, seemingly meaningless tiles that form a clear picture when viewed together, pieces of non-sensitive data can be combined to reveal a sensitive whole. If a database shows that a 42-year-old man in a specific zip code has a rare medical condition, and you know from LinkedIn that your neighbor is a 42-year-old man in that zip code, his medical secret has just become your lunch-break gossip.

This is not a theoretical fear. In one famous case, researchers identified individuals in an "anonymous" Netflix dataset by comparing movie ratings and dates to public reviews on IMDb. People who thought their quirky movie tastes were private suddenly found their entire viewing histories exposed. The lesson was clear: you cannot protect privacy by simply hiding a name; you have to protect the data itself from being too precise. Differential privacy was born from the realization that if an answer to a data query is 100 percent accurate, it is 100 percent likely to leak something about the people who provided the information.

How mathematical noise creates a cloak of invisibility

The heart of differential privacy is the injection of "noise." Imagine you are a teacher asking your students a very sensitive question, such as "Have you ever cheated on an exam?" If students answer directly, they risk their reputations. In a differentially private system, you would give each student a coin and tell them: "Flip the coin. If it comes up heads, tell the truth. If it comes up tails, flip it again; if the second flip is heads, say 'Yes,' and if it is tails, say 'No.'"

Because of the coin flips, a "Yes" answer no longer proves a student is a cheater. They might just be a victim of a tails-then-heads coin sequence. However, as the teacher, you know that on average 25 percent of all students will say "Yes" purely because of the coins, whatever their true answer, and that only half of the students are answering truthfully. With simple algebra, you can subtract that forced 25 percent from the observed share of "Yes" answers and double what remains to estimate the true percentage of cheaters in the room; the estimate gets more accurate as the class gets larger. The group trend is revealed, but every individual student has "plausible deniability." This is the core of the technology: we add enough randomness so that any single person's data is essentially "lost in the noise," while the "signal" of the entire population remains clear.
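The coin-flip protocol above can be simulated in a few lines. This is a minimal sketch, not a production mechanism: the function names, the class size of 100,000, and the 30 percent true cheating rate are all assumptions made up for illustration. The key relationship is that the expected share of "Yes" answers equals half the true rate plus one quarter, which the estimator inverts.

```python
import random
from typing import Sequence


def randomized_response(truth: bool, rng: random.Random) -> bool:
    """Answer one sensitive yes/no question using the two-coin protocol."""
    if rng.random() < 0.5:
        # First flip is heads: tell the truth.
        return truth
    # First flip is tails: the second flip alone decides the answer.
    return rng.random() < 0.5


def estimate_true_rate(answers: Sequence[bool]) -> float:
    """Invert P(yes) = 0.5 * p + 0.25 to estimate the true rate p."""
    observed_yes = sum(answers) / len(answers)
    return 2 * (observed_yes - 0.25)


rng = random.Random(0)
true_rate = 0.30  # hypothetical share of students who really cheated
students = [rng.random() < true_rate for _ in range(100_000)]
answers = [randomized_response(s, rng) for s in students]
estimate = estimate_true_rate(answers)
print(f"estimated rate: {estimate:.3f} (true rate: {true_rate})")
```

No single entry in `answers` reveals what that student actually did, yet the estimate lands close to the true rate because the coin-induced errors average out over a large group.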

The privacy budget and the tradeoff of truth

One of the most elegant parts of differential privacy is that it allows us to measure exactly how much privacy we are losing. This is managed through a variable known as "epsilon." You can think of epsilon as a "privacy budget." Whenever a researcher asks a question of a dataset, they "spend" a little bit of that budget. A low epsilon means a lot of noise is added, providing heavy privacy but less precise answers. A high epsilon means less noise is added, providing very accurate data but weaker privacy.
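To make the epsilon tradeoff concrete, here is a small sketch using the Laplace mechanism, a standard way of calibrating noise to epsilon that this article does not name explicitly. It assumes a simple counting query, where adding or removing one person changes the answer by at most 1, so the noise scale is 1 / epsilon. The function name, the true count of 1,000, and the epsilon values are hypothetical.

```python
import random


def noisy_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Return a count with Laplace noise of scale 1 / epsilon added."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = 1.0 / epsilon  # sensitivity of a count (1) divided by epsilon
    # The difference of two exponentials with mean `scale`
    # is a Laplace random variable centered at 0 with that scale.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


rng = random.Random(42)
true_count = 1_000  # hypothetical number of people matching a query
for epsilon in (0.1, 1.0, 10.0):
    samples = [round(noisy_count(true_count, epsilon, rng), 1) for _ in range(5)]
    print(f"epsilon={epsilon}: {samples}")
```

With a small epsilon the released counts scatter widely around 1,000; with a large epsilon they hug the true value, which is exactly the privacy-versus-accuracy dial described above.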

Once the privacy budget is spent, the dataset must be retired or locked away. This is because every additional question allows an observer to slowly "average out" the noise and see the true data underneath. This forced honesty is a revolution in data ethics. In the past, companies would claim data was "private" without any way to prove it. With differential privacy, they can point to the epsilon value and prove, with a mathematical guarantee, the maximum amount of information that could possibly have leaked. It turns out that in the world of data, secrecy is a finite resource that must be spent wisely.
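The budgeting idea can be sketched as a tiny accountant. It assumes the simplest accounting rule, basic sequential composition, under which the epsilons of successive queries simply add up; real systems may use tighter rules. The class and exception names are hypothetical and do not come from any particular library.

```python
class BudgetExhausted(Exception):
    """Raised when a query would exceed the total privacy budget."""


class PrivacyBudget:
    """Track cumulative epsilon spent across queries on one dataset."""

    def __init__(self, total_epsilon: float) -> None:
        if total_epsilon <= 0:
            raise ValueError("total_epsilon must be positive")
        self.total = total_epsilon
        self.spent = 0.0

    @property
    def remaining(self) -> float:
        return self.total - self.spent

    def spend(self, epsilon: float) -> None:
        """Charge one query's epsilon, refusing it if the budget would overrun."""
        if epsilon <= 0:
            raise ValueError("epsilon must be positive")
        # Small tolerance so floating-point rounding does not reject
        # a query that exactly uses up the remaining budget.
        if self.spent + epsilon > self.total + 1e-12:
            raise BudgetExhausted(
                f"query needs {epsilon}, only {self.remaining:.3f} left"
            )
        self.spent += epsilon


budget = PrivacyBudget(total_epsilon=1.0)
budget.spend(0.4)  # first query
budget.spend(0.4)  # second query
try:
    budget.spend(0.4)  # third query would push the total to 1.2
except BudgetExhausted as exc:
    print("refused:", exc)
```

Refusing the third query is the code-level version of retiring the dataset: once the total is spent, no further answers are released.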

| Concept | Traditional Anonymization | Differential Privacy |
| --- | --- | --- |
| Core Method | Removing names and IDs | Adding mathematical noise |
| Risk Factor | High (Mosaic Effect/Linking) | Low (Mathematically Proven) |
| Accuracy | High, until breached | Adjusted via "Privacy Budget" |
| Guarantee | Built on trust/Vague | Formal and quantifiable |
| Data Utility | High, but risky | Tunable based on needs |

Implementing guardrails in the real world

You might be surprised to learn that you are likely already part of a differentially private system. Tech giants like Apple and Google use these algorithms to see which emojis are trending or which websites are draining phone batteries. Rather than seeing exactly what you do, they receive a "noisy" version of your activity. When millions of users send this noisy data, the individual "errors" cancel each other out, leaving the company with a crystal-clear picture of global trends without ever knowing your specific habits. This allows for better products without the creepy surveillance.

The 2020 U.S. Census also famously adopted differential privacy to protect citizens' identities. Because the Census is used to distribute billions of dollars and redraw political districts, the data must be accurate. However, the law also mandates that individual responses remain confidential for 72 years. By using differential privacy, the Census Bureau can release detailed demographic maps while ensuring that a nosy neighbor cannot use the data to figure out the ages or household size of the family living next door. It is a delicate balance between the government's need for truth and the human right to a private life.

Debunking the myth of "perfect" data

A common misconception is that differential privacy "corrupts" data or makes it less useful. Critics often argue that if the data isn't 100 percent accurate, it's "fake." This ignores the fact that all data contains some level of error, whether from typos, reporting mistakes, or outdated records. Differential privacy simply adds intentional, controlled errors whose size is known in advance, unlike accidental ones. In some settings, "noisy" data can even be more reliable because it helps guard against "overfitting," a common problem where analysts find patterns in a small group that do not actually exist in the real world.

Another myth is that you cannot do "real" science with differential privacy. In reality, modern statistical tools are perfectly capable of working with noisy data. Just as a digital camera uses software to remove "grain" from a low-light photo, data scientists use specialized algorithms to account for the noise in these datasets. We are learning that we do not need to know everything about everyone to know everything about us. We can understand the forest perfectly well without measuring every single vein on every single leaf.

The future of shared knowledge

As we move deeper into the age of artificial intelligence, the need for safe data sharing will only grow. AI models require mountains of data to learn, but those mountains often contain sensitive medical records, financial histories, and private messages. Differential privacy offers a bridge to a future where we can train life-saving medical AI on real patient data without ever risking the exposure of a single patient's diagnosis. It turns out that the secret to a more open and knowledgeable society isn't more transparency, but a more sophisticated way to handle shadows.

By embracing the beauty of mathematical noise, we are moving past a primitive "hide and seek" approach to privacy. We are building a world where information can be both a public good and a personal shield. This shift requires us to get comfortable with a little bit of uncertainty for the sake of a lot of security. As you navigate the digital landscape, take heart: some of the most brilliant minds in mathematics are working to ensure that while the world might learn from you, it will never truly "know" you in a way that can be used against you. Explore the digital world with curiosity, knowing that the "noise" you see is actually the sound of your freedom being protected.